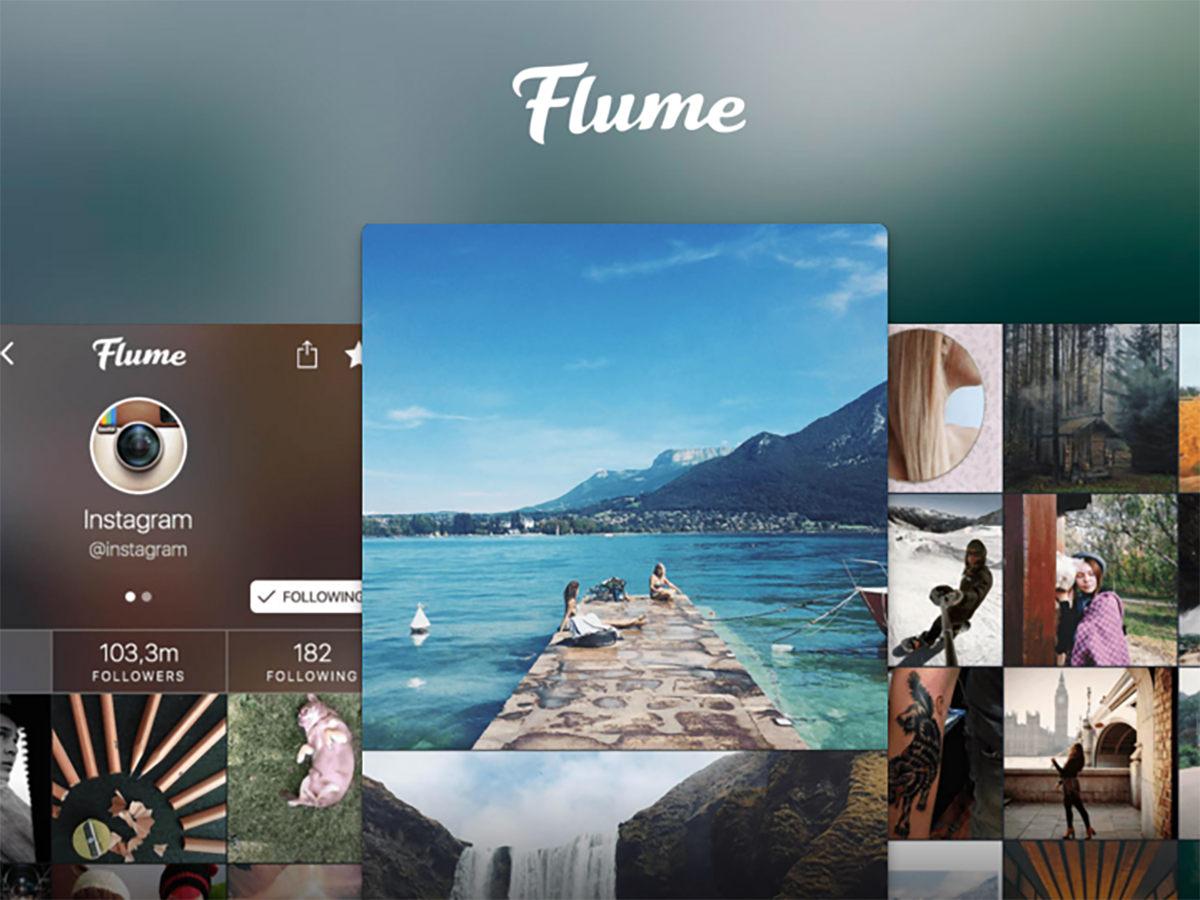

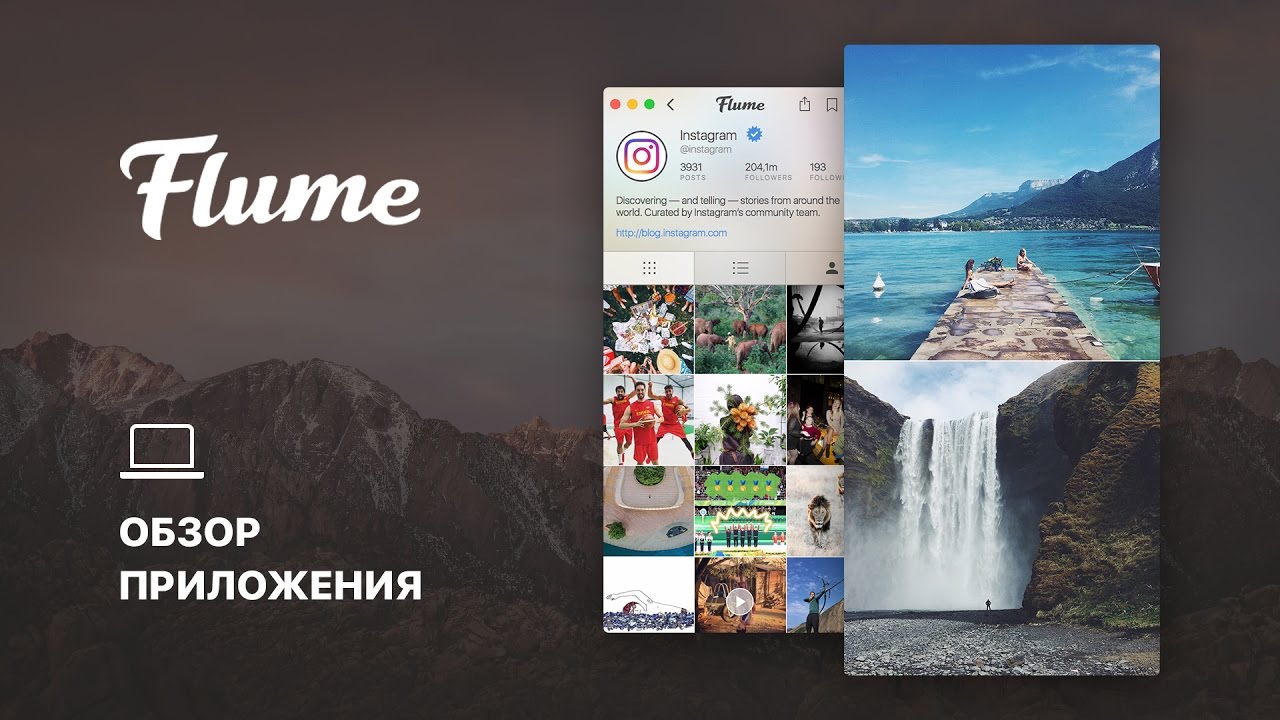

Flume’s interface for uploading posts.

Flume allows you to upload photos and videos, write captions, tag users, set cover frames, crop and resize your content, and even share to other linked social media accounts (outside of Instagram).

You can even set up notifications so that you are alerted on your desktop every time someone sends you a DM, even if the Flume app isn’t open.

In your conversations, you can type your message, attach photos or videos, take your own photos or videos (from your webcam), include emojis, bookmark your conversation, share posts, locations, hashtags, and profiles, download shared photos, Like messages, view message timestamps, translate messages, and unsend messages. Start conversations by searching for Instagram users just like you would on your phone.

Flume allows you to send, receive, and manage your DMs from your Mac desktop. You can view your feed in a 3×3 or 1×1 grid. You can also access everything you would normally access on your phone.

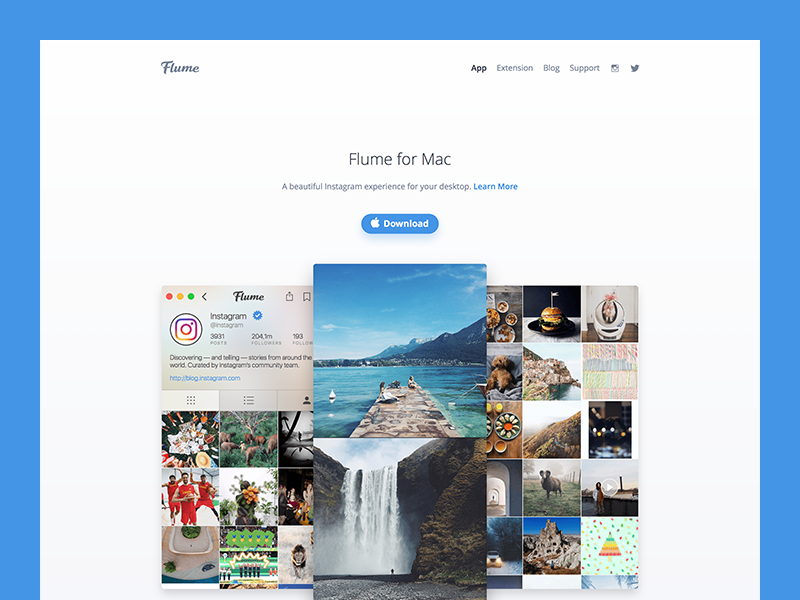

AiGrow – a comparison of two Instagram growth services

Setup Flume

Start by downloading the Flume software (Flume is only available to Mac users).

I have been looking into the concepts and applications of Kafka Connect, and I even worked on one project based on it during an internship. In my current job, I am considering replacing the architecture of our real-time data ingestion platform, which is currently based on Flume -> Kafka, with Kafka Connect and Kafka.

My reasons for considering the switch are mainly these:

- With Flume we need to install an agent on each remote machine, which generates a lot of extra devops work, especially where I work, because access to machines is managed rigidly and it is hard to maintain utilities on machines that belong to other departments.
- The machines' OS environments vary. If we install Flume on a variety of machines, some machines with a different OS and JDK (I have met some with the IBM JDK) just cannot make Flume work well, which in the worst case can result in zero data ingestion.

It looks like with Kafka Connect we can deploy it in a centralized way alongside our Kafka cluster, so that the devops cost can go down. Besides, we can avoid installing Flume on machines belonging to others and avoid the risk of incompatible environments, to ensure stable ingestion of data from every remote machine.

Also, our main ingestion scenario is only to ingest log text files that are written in real time on remote machines (on Linux and Unix file systems) into Kafka topics, and that is it. So I won't need the advanced connectors that are not supported in the Apache version of Kafka.

But I am not sure whether I understand the usage scenario of Kafka Connect correctly. I am also wondering whether Kafka Connect should be deployed on the same machines as the data sources, or whether it is OK for them to reside on different machines. If they can be different, then why does Flume require the agent to run on the same machine as the data source? I hope someone more experienced can shed some light on that.
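The ingestion scenario described above (log lines appended to a text file, each one forwarded to a Kafka topic) can be sketched in plain Python. This is only an illustration of the tail-and-forward idea, not the API of Flume or Kafka Connect: `forward_new_lines` and the `send` callback are hypothetical names, and `send` stands in for whatever producer call would publish a record. The sketch assumes the file is readable through a local path on the machine where it runs.

```python
import os
import tempfile
from typing import Callable


def forward_new_lines(path: str, offset: int, send: Callable[[str], None]) -> int:
    """Pass every complete line written after `offset` to `send`.

    Returns the byte offset to resume from on the next call.
    `send` is a hypothetical stand-in for a Kafka producer call.
    """
    with open(path, "rb") as f:
        f.seek(offset)
        while True:
            line = f.readline()
            # Stop at end of file, or at a partial line that is still being written.
            if not line.endswith(b"\n"):
                break
            send(line.rstrip(b"\r\n").decode("utf-8", errors="replace"))
            # Only advance past lines that were fully forwarded.
            offset = f.tell()
    return offset
```

Calling the function repeatedly with the returned offset forwards only what was appended since the previous call, and a half-written last line is held back until its newline arrives.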